National Park Web Scraping

Posted by Wann-Jiun Ma

Updated: Dec 21, 2016

Contributed by Wann-Jiun Ma. He is currently attending the NYC Data Science Academy Online Data Science Bootcamp program. This post is based on his third class project - Web Scraping.


Introduction

We are planning a trip to national parks. With so many adventures to choose from, I thought it would be a good idea to scrape national park information from websites and use the scraped data to build a national park recommendation system for myself. The idea is pretty simple: 1) scrape national park features from websites; 2) perform EDA and data wrangling; 3) build models based on the scraped data; 4) evaluate and enjoy the results. I use both Scrapy and Beautiful Soup to scrape park information from two websites: Wikipedia and TripAdvisor. All code can be found at https://github.com/Wann-Jiun/nycdsa_project_3_web_scraping.

Wikipedia Web Scraping Using Scrapy

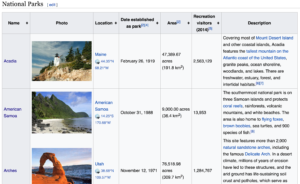

First, let's get an overview of the parks in the US. I use Scrapy to scrape park information from Wikipedia. Scrapy is a web scraping framework written in Python. A Scrapy project is built around "spiders", which are self-contained crawlers that follow a set of instructions to scrape information from websites. The information that I scrape from Wikipedia consists of park name, location, date established, park size, and number of visitors (2014). After scraping the data, I perform some data wrangling to prepare for EDA in the next stage of the analysis.

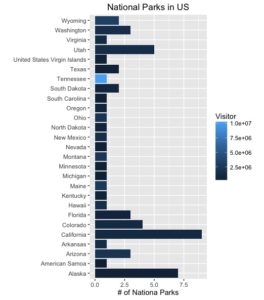

Let's see which state has the most national parks. I group the parks by state and plot the result. The figure shows that California has the most national parks (9), which is not surprising. Let's see if we can find any other interesting facts in the data. I also compute the mean number of visitors per park in each state. The figure shows that Tennessee had the highest mean in 2014. This is interesting because there is only one national park (Great Smoky Mountains) in Tennessee, so that single park accounts for the whole figure.
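The two aggregations described above can be sketched with pandas. This is a minimal illustration rather than the project's actual code: the column names (`park`, `state`, `visitors`) are assumptions, and the rows are made-up placeholders, not the scraped values.

```python
import pandas as pd

# Hypothetical sample rows; names and visitor counts are placeholders.
parks = pd.DataFrame(
    {
        "park": ["Park A", "Park B", "Park C", "Park D"],
        "state": ["California", "California", "Tennessee", "Utah"],
        "visitors": [1_000_000, 3_000_000, 10_000_000, 2_000_000],
    }
)

# Number of parks in each state, largest first.
parks_per_state = (
    parks.groupby("state")["park"].count().sort_values(ascending=False)
)

# Mean number of visitors per park within each state, largest first.
mean_visitors = (
    parks.groupby("state")["visitors"].mean().sort_values(ascending=False)
)

print(parks_per_state)
print(mean_visitors)
```

Either series can then be plotted directly, for example with `parks_per_state.plot(kind="bar")` if matplotlib is installed.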

TripAdvisor Web Scraping Using Beautiful Soup

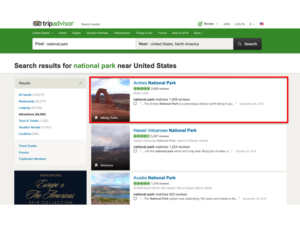

Now, let's scrape more information about national parks. I use Beautiful Soup to scrape data from TripAdvisor. Beautiful Soup is an easy-to-use Python package for parsing HTML, which makes it well suited to web scraping. The information I scrape includes park name, review star rating, number of reviews, location, park features (hiking trails, valleys, volcanoes, etc.), and URL links.
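As a rough sketch of how Beautiful Soup pulls such fields out of a page, the example below parses a small inline HTML snippet. The markup, the class names (`listing`, `park-link`, `rating`, `review-count`), the base URL, and the helper `parse_listings` are all invented for illustration; they do not reflect TripAdvisor's real page structure or the project's code.

```python
from urllib.parse import urljoin

from bs4 import BeautifulSoup

# Hypothetical markup standing in for a downloaded listing page.
SAMPLE_HTML = """
<div class="listing">
  <a class="park-link" href="/park-a">Park A</a>
  <span class="rating">4.5</span>
  <span class="review-count">1,234 reviews</span>
</div>
<div class="listing">
  <a class="park-link" href="/park-b">Park B</a>
  <span class="rating">5.0</span>
  <span class="review-count">987 reviews</span>
</div>
"""

BASE_URL = "https://www.example.com"  # placeholder, not the real site


def parse_listings(html, base_url=BASE_URL):
    """Return one dict per listing with name, absolute URL, stars, and review count."""
    soup = BeautifulSoup(html, "html.parser")
    rows = []
    for listing in soup.find_all("div", class_="listing"):
        link = listing.find("a", class_="park-link")
        rating = listing.find("span", class_="rating")
        count = listing.find("span", class_="review-count")
        # "1,234 reviews" -> 1234
        n_reviews = int(count.get_text(strip=True).split()[0].replace(",", ""))
        rows.append(
            {
                "name": link.get_text(strip=True),
                "url": urljoin(base_url, link["href"]),
                "stars": float(rating.get_text(strip=True)),
                "n_reviews": n_reviews,
            }
        )
    return rows


records = parse_listings(SAMPLE_HTML)
for record in records:
    print(record)
```

In a real run, the HTML would come from an HTTP request rather than a string, and the collected `url` values could be fetched in turn to scrape each park's own page.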

Note that the URL links are scraped based on the "GET" request information, which is provided by web browsers. Using these links, we can also visit each individual park's page to scrape more information. The following figure shows the information I scrape from each individual park's page.

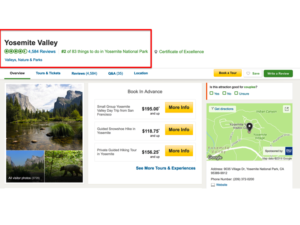


Finally, I collect the park features: name, number of reviews, review star rating, location, park feature, and number of things to do. The nominal categorical variables, park feature and location (state), are one-hot encoded using Pandas' "get_dummies" function.
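A minimal sketch of this encoding step is shown below. The column names and rows are assumptions made up for illustration; only the use of `pd.get_dummies` comes from the description above.

```python
import pandas as pd

# Hypothetical rows; column names and values are placeholders.
parks = pd.DataFrame(
    {
        "name": ["Park A", "Park B", "Park C"],
        "state": ["California", "Utah", "California"],
        "feature": ["valleys", "hiking trails", "volcanoes"],
        "stars": [4.5, 5.0, 4.0],
    }
)

# Replace each nominal column with one 0/1 indicator column per category.
encoded = pd.get_dummies(parks, columns=["state", "feature"], dtype=int)

print(encoded.columns.tolist())
```

The original `state` and `feature` columns are dropped and replaced by indicator columns such as `state_California`, so every remaining feature is numeric and can be passed to a distance-based model.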


The features are fed into the k-means clustering algorithm to explore the underlying structure of the park data. k-means clustering partitions the observations into k clusters so that each observation belongs to the cluster whose mean (centroid) is nearest to it. Parks in the same cluster are therefore similar to one another in feature space. Using the clusters, we can recommend parks that are similar to one the user names as input.
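To make the idea concrete, here is a small from-scratch sketch of k-means (Lloyd's algorithm) written with NumPy, followed by a same-cluster lookup. It is not the project's implementation, which may well use a library such as scikit-learn. The function names `kmeans` and `similar_parks`, the three feature columns, and the data rows are all assumptions for illustration.

```python
import numpy as np


def kmeans(X, k, n_iter=100, seed=0):
    """Plain Lloyd's algorithm; returns (labels, centroids)."""
    rng = np.random.default_rng(seed)
    # Initialise the centroids with k distinct rows chosen at random.
    centroids = X[rng.choice(len(X), size=k, replace=False)].astype(float)
    for _ in range(n_iter):
        # Assign every row to its nearest centroid (Euclidean distance).
        distances = np.linalg.norm(X[:, None, :] - centroids[None, :, :], axis=2)
        labels = distances.argmin(axis=1)
        # Move each centroid to the mean of its rows; keep it if it has none.
        new_centroids = np.array(
            [
                X[labels == j].mean(axis=0) if np.any(labels == j) else centroids[j]
                for j in range(k)
            ]
        )
        if np.allclose(new_centroids, centroids):
            break
        centroids = new_centroids
    return labels, centroids


def similar_parks(names, labels, query):
    """Names of the other parks that share a cluster with `query`."""
    cluster = labels[names.index(query)]
    return [n for n, lab in zip(names, labels) if lab == cluster and n != query]


# Made-up feature rows: [review stars, has hiking trails, has volcanoes].
names = ["Park A", "Park B", "Park C", "Park D"]
X = np.array(
    [
        [4.5, 1, 0],
        [4.6, 1, 0],
        [3.0, 0, 1],
        [3.1, 0, 1],
    ],
    dtype=float,
)

labels, centroids = kmeans(X, k=2)
print(similar_parks(names, labels, "Park A"))
```

With real data, features on different scales (review counts versus 0/1 indicators, for example) would usually be standardised first, since k-means relies on Euclidean distance.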

Wann-Jiun Ma

Wann-Jiun Ma (PhD Electrical Engineering) is a Postdoctoral Associate at Duke University. His research is focused on mathematical modeling, algorithm design, and software/experiment implementation for large-scale systems such as wireless sensor networks and energy analytics. Through extensive training in mathematical/statistical analysis and programming languages, and the many challenges he confronted during his doctoral and postdoctoral studies, he has become an experienced and well-trained researcher in data science. This background will serve him well as he moves on to the next stage of his career as a data scientist in industry. https://www.linkedin.com/in/wannjiun
